Scraping competitor data is a powerful way to gather insights, but it comes with legal and ethical boundaries. Here's what you need to know to stay compliant:

- Public data is fair game: If data is accessible without logging in, such as prices or store locations, scraping it is generally legal.

- Avoid personal or copyrighted data: Names, emails, and creative content are protected under laws like GDPR and copyright regulations.

- Respect website rules: Check for restrictions in the site's Terms of Service and robots.txt file.

- Don't bypass barriers: Circumventing CAPTCHAs, login walls, or rate limits can lead to legal trouble.

- Use official APIs when available: APIs provide authorized access to data and reduce compliance risks.

Legal frameworks vary by region. For example, the U.S. allows scraping public data under the CFAA, but bypassing technical safeguards may be prohibited. In the EU, GDPR imposes strict rules on personal data collection. Always stay informed about local laws and review your scraping practices regularly.

The 2024 hiQ Labs v. LinkedIn case reaffirmed that accessing public data doesn’t violate U.S. anti-hacking laws, but creating accounts or ignoring technical safeguards can lead to penalties. To scrape responsibly, use compliant tools, implement rate limits, and document your activities. Ethical scraping protects your business while maintaining access to valuable insights.

Is Web Scraping Legal? | Industry Expert Interview

sbb-itb-ea6392c

Legal Framework for Data Scraping

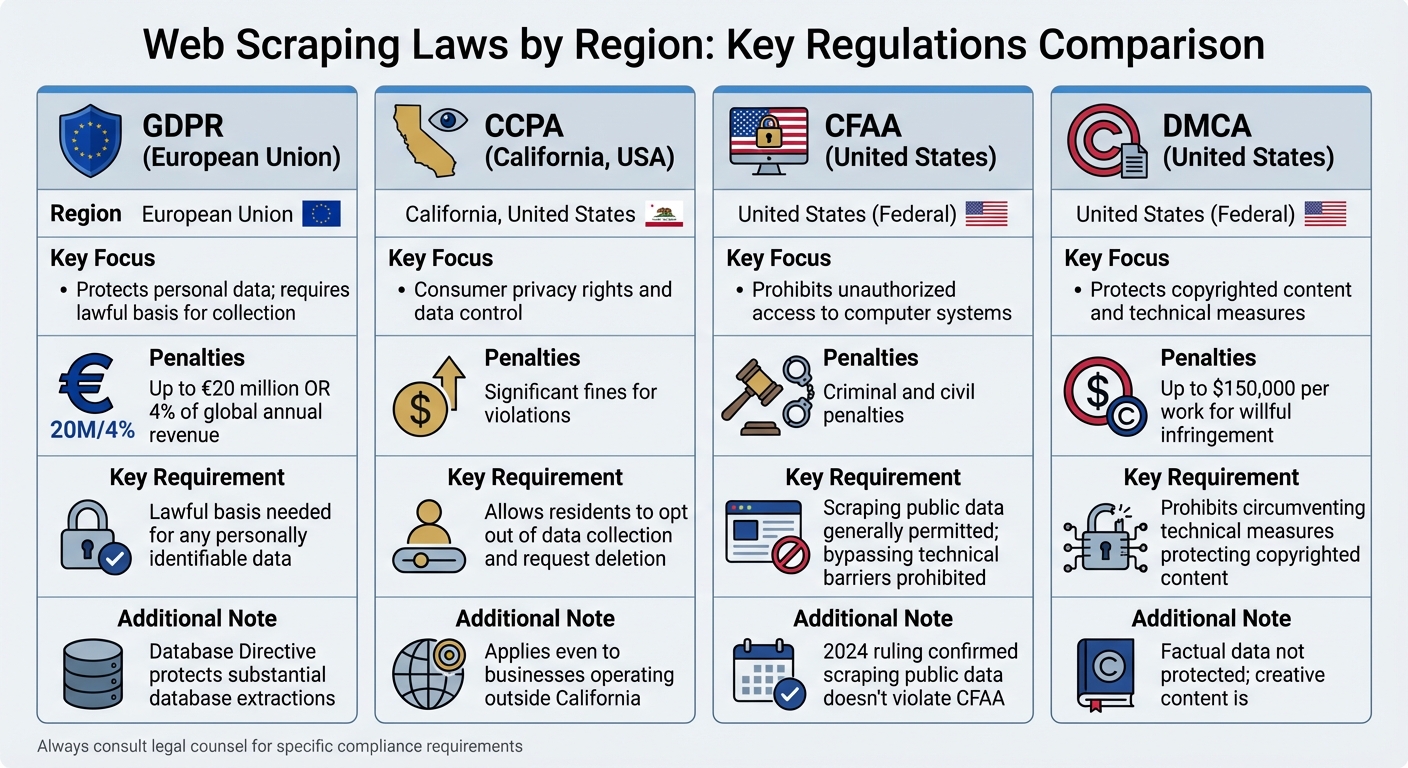

Web Scraping Laws and Regulations by Region: GDPR, CCPA, CFAA, and DMCA Comparison

What Makes Scraping Legal or Illegal

The legality of data scraping depends on three key factors: the type of data being scraped, how you access it, and how the data is used afterward.

The distinction between public and private access is critical. If the data is publicly visible - like product prices, store locations, or publicly accessible social media posts - scraping it typically does not violate anti-hacking laws like the Computer Fraud and Abuse Act (CFAA). A U.S. Federal court ruling in January 2024 sided with Bright Data in its case against Meta, affirming that scraping publicly available data from platforms like Facebook and Instagram does not breach the CFAA.

However, creating an account changes the legal landscape. Agreeing to a website’s Terms of Service during registration makes those terms legally binding. For example, in the hiQ Labs v. LinkedIn case, LinkedIn accused hiQ of using fake accounts to bypass its protections. The case was eventually settled in 2022, highlighting the complexities of scraping from behind a login.

The type of data being scraped is another major factor. Factual data, such as prices or product names, is not protected by copyright laws. On the other hand, creative content - like articles, photos, or the unique structure of a database - may be copyright-protected. Scraping personal data, such as names, email addresses, or IP information, without consent can trigger strict regulations under laws like GDPR and CCPA. In the U.S., copyright violations can result in fines of up to $150,000 per work, while GDPR penalties can reach €20 million or 4% of a company’s global annual revenue.

Before starting any scraping project, consider these questions: Are you scraping personal data? Are you scraping copyrighted material? Are you accessing data behind a login? If the answer to any of these is "yes", a thorough legal review is essential. Keep in mind that legal requirements can vary significantly by region, as discussed below.

Scraping Laws by Country and Region

While the general principles of scraping legality remain consistent, regional laws add layers of complexity. Understanding these differences is critical to ensuring compliance.

In the United States, the CFAA governs "unauthorized access" to computer systems. Courts generally agree that scraping publicly available data does not qualify as unauthorized access. However, bypassing technical barriers or violating a website’s Terms of Service could result in CFAA violations. The Digital Millennium Copyright Act (DMCA) also comes into play, as it prohibits bypassing technical measures designed to protect copyrighted content.

In the European Union and the UK, the focus is on personal data protection under the General Data Protection Regulation (GDPR). GDPR requires a lawful basis for collecting any data that can identify individuals. Additionally, the EU’s Database Directive protects website owners from having substantial portions of their databases extracted, even if the data is publicly accessible.

California’s CCPA gives residents the right to opt out of data collection and request the deletion of their personal information. These rights apply even if a business operates outside of California.

| Law/Regulation | Region | Key Focus |

|---|---|---|

| GDPR | European Union | Protects personal data; requires lawful basis for collection |

| CCPA | California, USA | Allows residents to opt out of data collection and request deletion |

| CFAA | United States | Prohibits unauthorized access to computer systems |

| DMCA | United States | Regulates circumvention of technical measures for copyrighted works |

The Ryanair v. PR Aviation case highlights how interpretations can vary by region. A Dutch court ruled that PR Aviation was allowed to scrape flight prices since Ryanair hadn’t explicitly bound visitors to their terms. These regional differences make it essential to understand local laws before diving into technical safeguards like robots.txt files.

Robots.txt Files and Anti-Bot Systems

Beyond legal regulations, technical protocols also play a role in compliant scraping. The robots.txt file acts as a website’s guide for automated systems, specifying which parts of the site are off-limits for crawling. This file is typically located at website.com/robots.txt. While ignoring robots.txt may not always be illegal for publicly accessible data, it can be used as evidence of bad faith or unethical conduct in legal disputes.

Respecting technical barriers is equally important. Anti-bot measures like CAPTCHAs, IP blocking, and rate limiting are designed to control access. Circumventing these defenses could turn an otherwise lawful scraping activity into a case of unauthorized access under laws like the CFAA.

"The key distinction lies in how you scrape and what you scrape, not the act of scraping itself." – Kevin Sahin, Co-founder, ScrapingBee

Excessive requests can also lead to claims of Denial of Service (DoS), which are illegal in many jurisdictions. Even if criminal laws are not violated, civil claims such as trespass to chattels could arise if automated requests harm a website’s server performance.

To stay compliant, always check the robots.txt file before scraping, use a clear User-Agent string to identify your bot, and implement rate limiting by spacing requests 1–2 seconds apart. Watch for "429 Too Many Requests" errors and respect "Retry-After" headers to avoid overloading servers. Following these practices not only demonstrates good faith but also minimizes the risk of legal issues and IP bans.

How to Evaluate Target Websites and Data Types

To build a compliant web scraping framework and steer clear of legal trouble, it's essential to assess target websites for data accessibility and potential legal risks. Here's a closer look at how to approach this process.

How to Identify Publicly Available Data

The first step is determining whether the data you want to scrape is publicly accessible. Public data is generally defined as information that can be accessed without requiring a login, password, or payment.

"The CFAA concept of 'without authorization' simply does not apply to public websites." - Ninth Circuit Court, HiQ v. LinkedIn Judgment

Pay attention to how terms and conditions are presented on the website. Terms hidden in footer links (known as "browsewrap") are less enforceable compared to "clickwrap" agreements, which require users to actively agree. For instance, in January 2024, a U.S. Federal court sided with Bright Data in a case against Meta, ruling that scraping publicly accessible data from Facebook and Instagram - without bypassing login walls - does not violate the Computer Fraud and Abuse Act (CFAA).

Another critical step is verifying whether the data contains Personal Identifiable Information (PII) or copyrighted materials. Under the EU's DSM Directive, website owners must signal their opt-out preferences for data mining in a machine-readable format, often through the robots.txt file. Make it a habit to check example.com/robots.txt for "Disallow" directives, which indicate restrictions on automated tools.

Once you've identified public data, it's time to evaluate the types of data that may carry higher legal risks.

Data Types to Avoid Scraping

Some categories of data come with significant legal challenges and should generally be avoided. For example:

- Personal data: This includes names, email addresses, phone numbers, home addresses, IP addresses, and birth dates. Even if this information is visible on social media profiles, scraping it without legal justification could lead to hefty penalties under GDPR or CCPA - up to €20 million or 4% of global annual revenue.

- Sensitive personal information: Health records, biometric data, and financial details are subject to even stricter regulations.

- Copyrighted content: Extracting full articles, videos, music, or proprietary images without permission can result in statutory damages of up to $150,000 per work if deemed willful infringement.

- Data behind logins or paywalls: Accessing such data is typically prohibited due to binding Terms of Service (ToS) agreements.

Here's a quick breakdown of legal risks by data type:

| Data Category | Legal Risk Level | Key Consideration |

|---|---|---|

| Public Factual Data (e.g., prices, weather) | Low | Generally permissible if not restricted by login |

| Personal Data (PII) | High | Requires compliance with GDPR/CCPA; lawful basis needed |

| Copyrighted Content | Medium | Dependent on "fair use" and intended usage |

| Data Behind Login | High | Restricted by ToS agreed to during registration |

After understanding the data categories, the next step is to carefully examine the website's policies.

How to Review Website Policies

Before moving forward, scrutinize the website’s Terms of Service (ToS) and privacy policies. Take note of whether account creation is required. If you’ve registered for an account, you’ve likely agreed to the ToS, making them enforceable.

Search the ToS for keywords like "automated access", "scraping", "crawling", "bots", or "data mining." These terms will reveal whether the website explicitly prohibits such activities. Platforms like Amazon, Google, and eBay are increasingly aggressive in enforcing ToS violations related to unauthorized scraping of product data and reviews.

If the website offers an official API, consider using it to ensure lawful access to data. If the ToS language is ambiguous, reach out to the site owner for clarification or inquire about purchasing a commercial data feed. To protect yourself, document your compliance efforts by saving copies of the ToS and privacy policy as they existed at the time of scraping.

Building a Compliant Scraping Infrastructure

Once you've ensured the legality of your data collection, the next step is to set up a technical infrastructure that allows you to scrape responsibly. A well-constructed system helps you avoid IP bans, respect server limits, and adhere to ethical practices throughout the process.

Choosing Proxy Solutions for Compliant Scraping

Proxies play a key role in distributing requests, preventing server overload, and staying within rate limits.

- Residential proxies are highly trusted and work well with sites employing strong anti-bot defenses.

- ISP proxies combine the reliability of residential proxies with the speed of datacenter proxies, making them ideal for high-frequency, persistent sessions.

- Datacenter proxies are fast and cost-effective but are more prone to being blocked.

- Mobile proxies offer the highest trust levels but come with slower speeds and higher costs, making them suitable for heavily protected sites.

Here’s a quick comparison of proxy types:

| Proxy Type | Trust Score | Speed/Reliability | Cost | Best Use Case |

|---|---|---|---|---|

| Datacenter | Low | High | Low | Public data with basic rate limits |

| Residential | High | Moderate | High | Protected e-commerce and social media sites |

| ISP (Static) | High | High | Moderate | High-scale scraping requiring persistent sessions |

| Mobile | Highest | Low | Highest | Highly protected targets with aggressive anti-bot measures |

When choosing a proxy provider, look for services that source residential IPs ethically and maintain success rates above 95%.

For professional needs, BirdProxies offers both residential and ISP proxy solutions. Their ISP proxies provide dedicated static IPs with low latency and competitive pricing. Residential proxies, on the other hand, feature rotating IPs with global coverage and pay-per-GB pricing starting at $3.50 per GB. Both options include instant activation, a 24/7 dashboard, and free replacements for banned proxies.

Rotation strategies are also essential. Per-request rotation, which changes IPs with every request, provides maximum anonymity and is perfect for large-scale data collection. Meanwhile, session-based rotation keeps an IP active for 1–10 minutes, which works well for sites requiring cookies or temporary authentication.

Lastly, integrate retry logic with exponential backoff to handle rate limits effectively.

Using APIs Instead of Scraping

When proxies aren’t enough, consider using APIs for data collection. Official APIs, when available, are the most straightforward and compliant way to access data. They are explicitly authorized by the website owner and often come with clear usage terms and rate limits. For example, the Google Maps API uses a usage-based pricing model that scales with request volume. While APIs may involve costs, they eliminate legal uncertainties and reduce the technical burden of parsing HTML.

If an API doesn’t provide all the data you need, consider reaching out to the website owner about purchasing a commercial data feed or expanded API access. When no official API exists, managed web scraping APIs can be a viable alternative. These services handle proxy rotation, CAPTCHA solving, and browser fingerprinting automatically, usually through credit-based pricing models ranging from $20 to $100 per month.

Anti-Bot Evasion Techniques That Stay Compliant

Modern anti-bot systems rely on behavioral analysis, such as tracking mouse movements and browsing speed, to detect bots. To scrape responsibly while avoiding detection, it’s important to mimic human behavior without crossing ethical or legal boundaries.

Start by respecting robots.txt directives. While not legally binding, robots.txt files indicate which paths are off-limits to automated crawlers and often include crawl-delay instructions.

"The robots.txt file is not a lawful enforcement but a voluntary compliance".

Parse the robots.txt file to ensure compliance.

Other best practices include:

- Randomizing intervals: Introduce 2–6 second delays between requests to mimic natural browsing and prevent server overload.

- Rotating User-Agent strings: Use a variety of User-Agent strings from browsers like Chrome, Firefox, and Safari to avoid detection.

- Using headless browsers: For JavaScript-heavy sites, tools like Puppeteer or Playwright can render pages and simulate real user interactions, such as mouse movements and clicks.

Set up automated monitoring for HTTP response codes. Alerts for spikes in 403 (Forbidden) or 429 (Too Many Requests) responses can help you adjust rotation frequencies or switch proxy types if blocking occurs.

Lastly, practice data minimization - scrape only the specific HTML elements needed for your project. If personal data is unintentionally collected, anonymize or delete it immediately to comply with privacy regulations like GDPR and CCPA.

"Data collection and web scraping is as ethical as the person doing it... ethical web scraping is a decision".

Best Practices for Ethical Data Scraping

Setting up a compliant infrastructure is only part of the equation. The way you operate your scraper on a daily basis is what truly determines whether you stay within ethical and legal boundaries.

Respecting Rate Limits and Server Load

One of the most important ethical principles in web scraping is avoiding server overload. Sending too many requests in a short period can disrupt normal server operations and inconvenience legitimate users.

- Watch for HTTP status codes. A

429 Too Many Requestsresponse is a clear signal that you've exceeded the server's rate limit. Pay attention to theRetry-Afterheader, which often specifies when you can resume requests. Ignoring this can lead to IP bans. - Randomize request intervals. Mimic human browsing behavior by varying delays between requests, ideally between 2–6 seconds. Fixed intervals are easier for anti-bot systems to detect.

- Scrape during off-peak hours. High-volume scraping should be scheduled when server traffic is naturally lower. For example, if you're targeting a U.S.-based site, consider running your scraper between 1:00 AM and 5:00 AM EST rather than during business hours.

- Use exponential backoff for retries. If a request fails or times out, wait progressively longer before retrying - 1 second, then 2 seconds, then 4 seconds, and so on. This approach reduces the strain on overloaded servers.

Documenting Your Scraping Activities

Once your infrastructure is in place, thorough documentation adds another layer of ethical accountability. Keeping detailed records can demonstrate your commitment to responsible practices.

- Track key metrics. Monitor HTTP response codes, response times, and page integrity. For example, a surge in 403 (Forbidden) responses or unusually fast 200 OK responses with incomplete content may indicate that your scraper is being flagged or served decoy data.

- Be transparent with your user-agent string. Instead of pretending to be a standard browser, use a descriptive string like

MyCompanyBot/1.0 (+https://yourcompany.com/bot-info). This allows website owners to contact you with concerns instead of blocking your scraper outright. - Keep records of legitimate interest analysis. If you're operating in the EU, document how your data collection aligns with lawful business purposes while addressing privacy concerns. Include details about which data you're collecting, why it's needed, and how you're protecting sensitive information.

- Develop an ethical checklist. For every project, outline key steps such as analyzing the target website, verifying robots.txt compliance, setting rate limits, minimizing data collection, and defining the business purpose. This structured process reinforces your ethical approach.

Using Scraped Data for Lawful Purposes

The way you use scraped data is just as important as how you collect it. Courts tend to view scraping more favorably when the data is used for transformative purposes rather than to replicate or compete with the original source.

"The information was used to create a transformative product and was not used to steal market share from the target website by luring away users or creating a substantially similar product." - Amber Zamora, Proposed Ethical Scraper Guidelines

- Focus on internal use. Prioritize using scraped data for internal analysis and business intelligence rather than redistributing it.

- Anonymize personal data immediately. If you accidentally collect personally identifiable information (PII), replace it with generic placeholders like

user1or mask sensitive details such as email addresses and phone numbers. Mishandling PII can lead to severe penalties under regulations like GDPR and CCPA. - Avoid copyrighted material. Don't scrape and republish protected content like product descriptions, blog posts, or images without permission. If you need such data, explore licensing options to stay compliant. Copyright violations can result in statutory damages of up to $150,000 per work.

- Respect paywalls and authentication systems. Scraping content behind login walls or paywalls often violates the Terms of Service you agreed to when creating an account. Such actions can be considered a breach of contract, even if the data itself isn't private.

Maintaining Compliance and Managing Risk

Building a scraper is just the beginning. Keeping it compliant requires constant monitoring and adjustments to stay within legal boundaries. This isn't a "set it and forget it" situation - it's an ongoing process.

Tracking Changes in Terms of Service

Websites frequently update their Terms of Service and anti-scraping defenses without warning. These changes can be subtle, like tweaks to class names, IDs, or layouts, but they often signal stricter policies. Regularly review site structures and use automated alerts to catch these shifts early. For instance, a sudden spike in HTTP response codes like 403 (Forbidden) or 429 (Too Many Requests) might indicate new rate limits or anti-scraping measures.

It's especially important to monitor Terms of Service when your scraper requires login credentials. Agreeing to these terms - often through a "clickwrap" agreement where users actively click "I agree" - creates a binding contract that courts are more likely to enforce.

Staying vigilant about these changes lays the groundwork for handling potential issues effectively.

How to Respond to Cease-and-Desist Notices

If you receive a cease-and-desist notice, don’t panic, but act quickly. This doesn't automatically mean you've broken the law, but it does require immediate attention. Pause all scraping activities on the affected site while your legal and technical teams assess the situation. The notice might cite issues like violations of the Computer Fraud and Abuse Act (CFAA), copyright infringement, or breach of contract.

Bring in legal experts who specialize in data scraping compliance to review the claim. A key factor is whether your scraper explicitly agreed to the site's Terms of Service through a "clickwrap" agreement, which courts take more seriously than "browsewrap" agreements that are simply linked in a footer.

"Where there is no authorization required in the first place, there is nothing to withdraw from later. The CFAA concept of 'without authorization' simply does not apply to public websites." - Ninth Circuit Court Ruling (HiQ v. LinkedIn)

Ignoring a legal notice is not an option. Consider the case of Meta Platforms, Inc. suing Social Data Trading Ltd. in December 2021 for scraping Instagram and Facebook data. The defendant failed to respond, resulting in a default judgment in Meta's favor.

If personal data is involved, assess whether your practices comply with regulations like GDPR or CCPA. To reduce risk, consider anonymizing or descoping personal data immediately. Additionally, check if the website provides an official API. Switching to an authorized data source can resolve legal issues and offer more stable access in the long run.

Scheduling Regular Legal Reviews

Compliance isn’t just about reacting to changes - it’s about staying ahead of them. Laws around data scraping are constantly evolving, shaped by new legislation and court rulings. Regular legal reviews are essential to avoid costly mistakes. Under GDPR, for example, fines can reach €20 million or 4% of global revenue, while copyright violations can cost up to $150,000 per work.

Before targeting a new site, evaluate it against a compliance checklist. Determine whether the data is public, personal, or copyrighted. Keep an eye on Terms of Service, as even "browsewrap" agreements can become enforceable if prominently displayed. Program your scrapers to collect only what’s necessary and delete personal data promptly after processing to align with privacy regulations.

"What you scrape today might be acceptable - but tomorrow, it could cross an ethical or legal line. Regularly re-evaluate the privacy and copyright implications of your scraping targets to ensure continued compliance." - Nicolas Rios, Head of Product, Abstract API

Work closely with legal professionals who understand the nuances of the CFAA, GDPR, and other privacy laws. The regulatory environment is tightening worldwide, with updates to CCPA in California, LGPD in Brazil, and the upcoming EU AI Act in 2024. Staying proactive with regular reviews ensures you’re prepared for these changes.

Conclusion

Legal competitor data scraping operates within the boundaries of public access, providing valuable insights without crossing ethical or legal lines. The distinction between lawful and unlawful scraping hinges on three key factors: whether the data is publicly accessible, the methods used to extract it, and how the collected data is utilized. To remain on the right side of the law, focus on publicly available information, avoid bypassing login barriers, and carefully review technical guidelines before starting.

The regulatory environment is becoming stricter, and penalties for violations can be severe. Courts are increasingly scrutinizing how scrapers interact with website Terms of Service, particularly when clickwrap agreements are in place. Compliance isn’t just advisable - it’s essential for maintaining a sustainable and lawful business.

Ethical considerations are equally important as legal ones in this tightening landscape.

"Ethical scraping involves respecting the website owner's rights and preferences regarding their data." - Roborabbit

Building a reliable infrastructure is critical for implementing ethical and compliant scraping practices. This includes using tools that support IP rotation, simulate human behavior, and avoid IP blocks without triggering anti-bot defenses. Professional proxy solutions, such as BirdProxies, offer rotating IPs, global reach, and instant activation, enabling businesses to extract insights effectively while minimizing bans and legal exposure.

As the data landscape evolves, combining scalable, compliant tools with ongoing legal vigilance is essential for staying competitive. The web scraping industry, valued at $4 billion in 2022, is expected to quadruple by 2035. With competition intensifying and anti-bot technologies advancing, businesses that prioritize compliance and invest in robust tools will position themselves for long-term success.

FAQs

What are the legal risks of scraping data from password-protected websites?

Scraping data from websites that require login credentials carries considerable legal risks. Many sites clearly state in their terms of service that automated access to members-only sections is not allowed. Ignoring these terms could result in a breach of contract and possibly lead to civil lawsuits.

Accessing password-protected areas without proper authorization might also violate the Computer Fraud and Abuse Act (CFAA), exposing you to potential civil and criminal penalties. Moreover, gathering personal information, such as email addresses or purchase records, without user consent could infringe on privacy laws like the California Consumer Privacy Act (CCPA) or GDPR, especially if the data involves users from the EU. Non-compliance with these laws could lead to hefty fines or enforcement actions.

To mitigate these risks, always obtain explicit permission, consult with legal professionals, and ensure your actions align with relevant laws and the website’s policies before attempting to scrape protected content.

How can I legally scrape data while complying with GDPR and CCPA regulations?

To keep your data scraping practices in line with GDPR and CCPA regulations, the first step is to identify any personal information you might collect. This could include names, email addresses, IP addresses, or location data. If your scraping involves personal data, you’ll need to either get explicit consent from users or establish a lawful basis for processing, such as legitimate interest. Be sure to document your process and maintain transparency by providing a clear privacy notice. This notice should explain what data you collect, why you need it, and how users can exercise their rights.

Limit the scope of your scraping to only what’s absolutely necessary, and whenever possible, anonymize or pseudonymize the data you collect. Protect all information with strong encryption and strict access controls. For CCPA compliance, you’ll also need to be ready to handle requests like “Do Not Sell My Personal Information” and provide California residents with details about the data you collect and how it’s used.

Using a trusted proxy service like BirdProxies can help you navigate challenges such as accessing restricted website sections or avoiding blocks. BirdProxies offers high-trust ISP and residential proxies with clean IP reputations, which can ensure safer and more compliant scraping. Just make sure your proxy provider operates as a data processor and adheres to GDPR and CCPA standards as part of their terms of service. By implementing these measures, you can confidently execute legal and ethical data scraping projects.

What are the best practices for using proxies legally and ethically in web scraping?

To gather data responsibly and within legal boundaries, start by choosing a reliable proxy provider that offers top-tier IP options, such as residential, ISP, or rotating proxies. These proxies help simulate real user behavior, making it less likely to trigger anti-bot defenses. Regularly rotating IPs is essential to avoid bans, while static or sticky proxies are better suited for tasks that require consistent sessions, like logging into accounts.

It’s crucial to stick to ethical and legal practices: only scrape data that is publicly accessible, respect the website’s robots.txt file and terms of service, and ensure your request patterns mimic typical human behavior. Adding short delays between requests and randomizing user-agent strings can further demonstrate responsible scraping practices.

A premium service like BirdProxies can support these efforts with features such as fast rotating proxies, geo-targeted IPs, and real-time monitoring. Pairing high-quality proxies with ethical scraping techniques allows you to collect competitor data effectively while staying on the right side of the law.