Want to scrape inventory data effectively without getting blocked? Here's the deal: e-commerce platforms are designed to detect and block automated tools. But proxies can help you bypass these defenses by masking your IP address and distributing requests across multiple locations.

To succeed, you need the right proxy setup, clear planning, and smart configurations. Here's a quick overview:

- Plan Ahead: Define the exact data you need (SKUs, prices, stock levels) and ensure compliance with legal and ethical guidelines like GDPR.

- Choose the Right Proxies: Use residential proxies for harder-to-access sites, and datacenter proxies for simpler tasks. Match proxy locations to your target region for accurate results.

- Optimize Your Setup: Rotate IPs, manage session persistence, and mimic human browsing patterns by randomizing delays and headers.

- Handle Errors Smartly: Rotate proxies or adjust delays when you encounter errors like rate limits (HTTP 429) or access blocks (HTTP 403).

- Ensure Data Quality: Validate fields regularly, monitor site changes, and use tools like headless browsers for dynamic content.

Key Tip: Always monitor proxy performance and costs. Using the right proxy type for the right task can save you time and money.

This guide breaks down everything from pre-scraping prep to long-term proxy management, ensuring your scraping efforts stay efficient, compliant, and effective.

Web Scraping with Professional Proxy Servers in Python

Pre-Scrape Planning Checklist

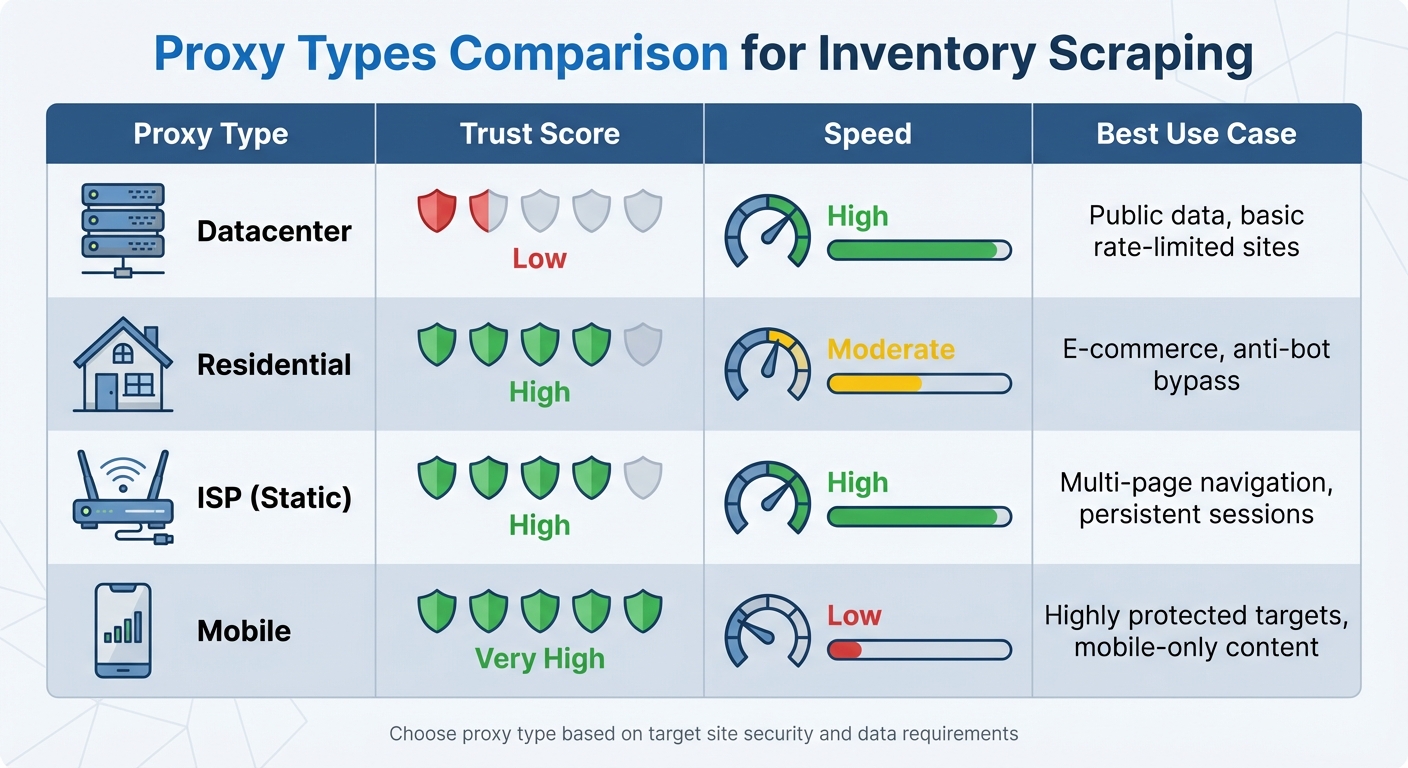

Proxy Types Comparison for Inventory Scraping: Trust, Speed, and Use Cases

Define Your Inventory Data Requirements

Start by outlining the essential inventory fields you'll need, such as SKUs, prices, price changes, and stock availability. For deeper insights, think about collecting secondary data like customer reviews, product trends, and new product launches. These additional fields can give you a competitive advantage.

Next, identify the types of web pages you’ll be scraping - whether it's product detail pages, category listings, or search results pages. Each type requires specific technical selectors, so take the time to map out each field with the right selectors. Make sure to use complete, real-world URLs instead of simplified links with just product IDs.

With your data requirements clearly defined, ensure you're aware of the legal boundaries to keep your scraping operations secure and compliant.

Review Legal and Ethical Constraints

Before scraping, review the website's robots.txt file and Terms of Service to identify disallowed pages and any crawl-delay rules. Ignoring these guidelines can lead to legal risks.

Be mindful of privacy laws like GDPR (in Europe) and CCPA (in the US). To stay compliant, avoid collecting any personally identifiable information (PII). Stick to publicly accessible data such as product prices, stock levels, and specifications. Keep in mind that scraping data from behind a login often violates the Terms of Service you agreed to when creating an account.

Once you've established these boundaries, you can move on to selecting the right proxies for your scraping needs.

Select Proxy Types and Locations

Choose proxies based on your data requirements and the security measures of the target site. For websites with strong anti-bot systems, opt for residential or ISP proxies, which are better at bypassing advanced defenses. While datacenter proxies are faster and more affordable, they are easier to detect due to their identifiable IP ranges. ISP proxies, on the other hand, strike a balance by combining datacenter speed with the trustworthiness of residential IPs, making them ideal for navigating multi-page inventory setups.

It's also crucial to match your proxy location to the website's target region. For example, if you're gathering pricing data for the US market, use US-based proxies. This helps you avoid being flagged as "suspicious traffic" and ensures accurate location-specific pricing. Providers like BirdProxies offer both ISP and residential proxies with multiple geo-locations, including the US, UK, Germany, and France - perfect for tasks requiring regional precision. For sites with aggressive anti-scraping measures, rotate proxies every 1-10 requests. For less restrictive sites, a rotation every 50-100 requests works well.

| Proxy Type | Trust Score | Speed | Best Use Case |

|---|---|---|---|

| Datacenter | Low | High | Public data, basic rate-limited sites |

| Residential | High | Moderate | E-commerce, anti-bot bypass |

| ISP (Static) | High | High | Multi-page navigation, persistent sessions |

| Mobile | Very High | Low | Highly protected targets, mobile-only content |

Proxy Configuration Checklist

IP Rotation and Session Management

How you manage IP rotation depends on the type of proxies you're using. Back-connecting proxies handle this automatically, cycling through IPs via a single endpoint. On the other hand, script-level rotation gives you direct control, allowing you to rotate through a list of proxy IPs within your code.

For tasks like inventory scraping, residential proxies are usually the go-to option. These proxies use IP addresses assigned by ISPs to actual households, making them much harder for detection systems to flag compared to datacenter IPs. When dealing with multi-page processes - like logging into accounts or completing checkout flows - sticky sessions are invaluable. They let you maintain the same IP address for a set period, ensuring your traffic doesn’t appear as if it’s coming from a new user with every request.

"If possible, prefer sticky sessions (keeping the same IP for a short duration when needed) for tasks like multi-page navigation or logins, but still rotate IPs periodically to avoid long-term profiling." - Datahut

Consistency is key. Keep your headers, cookies, and TLS fingerprints aligned throughout each session. Any mismatch can signal suspicious activity to web application firewalls, leading to blocks.

For example, BirdProxies offers both rotating residential proxies and dedicated ISP proxies across multiple geo-locations. This flexibility allows you to fine-tune your proxy setup to match your target region seamlessly.

Rate Limiting and Concurrency

Once you’ve nailed down your rotation strategy, the next step is managing your request flow to mimic natural browsing patterns.

Websites monitor how many requests come from a single IP within a specific time frame. Exceeding these thresholds can trigger CAPTCHAs or temporary bans. Additionally, anti-bot systems are adept at spotting traffic with perfectly consistent timing - an obvious sign of automation.

"A real human user will rarely request more than 5 pages per second from the same website." - ScrapeOps

To stay under the radar, randomize your delays. For example, introduce a base delay of 2–7 seconds between requests, occasionally mixing in longer pauses. If a request fails or gets blocked, retry with exponential backoff: wait 30 seconds, then 15 minutes, and finally 6 hours. This approach mimics how a human might troubleshoot errors.

Distribute your workload across multiple worker processes or cloud functions to avoid overwhelming a single IP. Instead of scraping thousands of pages in one go, break the task into smaller batches with meaningful pauses in between. Setting your request timeout to at least 60 seconds can also give proxy services enough time to retry failed requests using different IPs.

Headers and Protocol Optimization

With rotation and request pacing sorted, the final piece is crafting requests that look like they’re coming from a real browser.

Always send standard browser headers, including User-Agent, Accept, Accept-Language, Accept-Encoding, Connection, Upgrade-Insecure-Requests, and the Sec-Fetch-* series (like Dest, Mode, and Site). Your User-Agent string must match the browser and operating system you’re emulating. For instance, using a Windows User-Agent on a Linux server is a dead giveaway.

"A request from 'python-requests/2.28.1' screams 'I'm a bot!'" - ScraperAPI

Pay attention to header order - it should replicate how browsers send them. Also, include Client Hints that align with your User-Agent.

Match the Accept-Language header to the region of your proxy (e.g., "de-DE" for a German IP). Use a logical Referer header that reflects a natural user journey, like a category page when scraping product details. When possible, opt for HTTP/2 or HTTP/3 protocols, as they align with modern site preferences. If speed and stability are priorities, consider using SOCKS5 proxies, which often outperform standard HTTP proxies in these areas.

sbb-itb-ea6392c

Data Quality and Scraper Reliability

Validate Inventory Data Fields

Keep an eye on HTTP status codes - code 429 means you've hit rate limits, while 404 often signals missing pages or advanced blocks. Set up validation rules for key fields like stock levels, timestamps, and pricing to ensure the data types are accurate.

Changes in site structure can sneak up on you. Webmasters might tweak HTML layouts or rename fields, which can quietly disrupt your scraper. Automating alerts for sudden drops in item counts or missing key fields can help catch issues early. Store successful responses as benchmarks to spot real-time changes.

It's also crucial to verify that the response's "origin" IP matches the proxy you’re using. Tools like BirdProxies provide centralized dashboards and real-time analytics, making it easier to monitor proxy performance and maintain data integrity. Scan responses for phrases like "human verification" or "security check" - these often indicate CAPTCHA barriers. To avoid scraping duplicate entries caused by mobile and desktop URL variations, rely on canonical URLs (rel="canonical" tags) to identify the primary page version.

Once you've validated your data fields, the next step is tackling dynamic data extraction.

Handle Dynamic and Structured Data

Interactive websites often load inventory data through backend APIs, typically in JSON or GraphQL formats. These structured data sources are less likely to break when HTML layouts change. That said, keep in mind that raw HTML consumes much less bandwidth than rendering a full page.

For client-side rendered sites, tools like Puppeteer or Playwright are essential. These headless browsers fully render pages before scraping. To avoid detection by anti-bot systems, use stealth libraries such as puppeteer-extra-plugin-stealth. Also, match your browser profile (e.g., "Android Phone") with your proxy type (e.g., mobile proxy) to prevent mismatched signals that could trigger anti-bot defenses.

With your data extraction methods in place, it's time to focus on error management and failover strategies.

Error Handling and Failover

Organize errors by type to respond effectively. For example, when you hit a 429 (Too Many Requests) error, rotate your IP immediately and use exponential backoff. If you encounter a 403 (Forbidden) error, switch to residential proxies and double-check your headers.

| Error Type | Detection Indicator | Recommended Strategy |

|---|---|---|

| Rate Limiting | HTTP 429 | Rotate IP; use exponential backoff |

| Access Denied | HTTP 403 | Switch to residential proxy; verify headers |

| CAPTCHA | "hcaptcha", "recaptcha" | Use AI solvers or clean residential IP |

| Timeout | Connection reset | Extend timeout to 60s; check proxy health |

Set a 60-second timeout to allow for retries. Use a tiered removal system for failing proxies: retry after 30 seconds on the first error, remove the proxy for 15 minutes after the second error, and remove it for 6 hours after the third consecutive failure. Track proxy success rates by provider, type, and location to pinpoint and resolve weak spots in your infrastructure.

Long-Term Scraping Operations

Monitor Proxy Performance

Keeping a close eye on proxy performance is essential for long-term success. Regularly track success rates by provider, proxy type, and region. This allows you to pinpoint underperforming segments of your proxy pool before they lead to major disruptions.

Pay attention to HTTP status codes. For example, a 429 status typically means you're hitting rate limits, 403 or 404 might indicate IP blocks, and 5xx errors suggest server-side issues. Sharing real-time proxy health data across all your systems can help you act quickly to resolve problems.

For image-heavy pages, monitor bandwidth usage and block nonessential assets to save on costs. Tools like BirdProxies' real-time dashboard simplify this process, helping you tweak your proxy rotation strategy as needed.

Once your monitoring setup is in place, the next priority is securing your proxy operations.

Security and Access Management

Secure access to your proxy setup by managing credentials carefully and restricting usage. Store proxy credentials in environment variables or secret management tools instead of hardcoding them. Using containerization tools like Docker can further isolate proxy credentials, ensuring that a breach in one container doesn’t compromise the rest of your system.

Limit access to your proxy pool by implementing IP whitelisting, allowing only authorized servers to connect. Regularly audit proxy headers with tools like httpbin.org/headers to ensure sensitive information - like Proxy-Authorization or X-Forwarded-For headers - isn’t unintentionally exposed.

Rotate passwords and access keys regularly - at least every three months, or monthly for more sensitive operations. Reviewing access logs frequently can help you detect unauthorized activity early. For added security, use separate credentials for different scraping projects. This way, if one set is compromised, the impact is contained.

Optimize Costs and Resources

Balancing security and cost-efficiency is key to sustaining long-term scraping operations. Consider using a proxy tiering approach: datacenter proxies for less sensitive tasks and higher-cost residential or mobile proxies for sites with strict anti-bot defenses.

Reduce costs further by implementing response caching to minimize duplicate requests. For sites where data doesn’t change often, caching can cut proxy usage by 30–50%.

BirdProxies offers flexible pricing plans, making it easier to align your needs with your budget. Their solutions can help you scale your operations efficiently.

For large-scale scraping, distribute tasks using cloud functions like AWS Lambda or Google Cloud Functions. This method not only speeds up processing but also prevents overloading any single IP range. Additionally, it provides a natural failover mechanism, ensuring smooth operations even if one region encounters proxy issues.

Conclusion

Effective inventory scraping hinges on a few key elements: clear data requirements, smart proxy choices - such as residential or ISP proxies for their high trust levels - and strategic configurations like IP rotation, realistic headers, and behavior that mimics typical user activity.

Once your setup is ready, maintaining data quality becomes the priority. This involves consistent validation and robust error handling. For example, use exponential backoff strategies to manage 403 and 429 errors, and ensure your proxy geolocation aligns with the target site's primary audience. To keep your operations running smoothly over time, monitor performance metrics closely. Tracking success rates by proxy type and region can help identify and resolve issues before they escalate.

To manage costs, assign datacenter proxies to simpler tasks and save residential or ISP proxies for more challenging targets. Additionally, implementing response caching on stable sites can cut bandwidth usage by 30–50%.

BirdProxies offers a strong solution for large-scale scraping needs, providing ISP and residential proxies with ultra-low latency (30–50 ms), unlimited bandwidth, and access to 72 million IPs across 180+ countries. Users have reported months of uninterrupted scraping and the ability to handle over 200 concurrent tasks without blocks or connection limits.

FAQs

What’s the difference between residential and datacenter proxies for inventory scraping, and how do I choose?

When deciding between residential and datacenter proxies, it all comes down to your specific scraping requirements and how you prioritize trust, speed, and cost.

- Residential proxies rely on real household IP addresses, making them highly reliable for bypassing tough anti-scraping measures like CAPTCHAs or geo-restrictions. They’re perfect for sensitive tasks such as tracking e-commerce prices or accessing inventory blocked by location. The trade-off? They’re typically more expensive and slightly slower.

- Datacenter proxies, on the other hand, are faster and more budget-friendly. These proxies originate from data centers rather than real devices, making them a solid choice for bulk scraping on websites with basic bot detection. However, they’re more likely to get blocked on platforms with stronger anti-bot defenses.

For websites with advanced security measures, residential proxies are the way to go for dependable access. But if you’re working with less secure sites and need to prioritize speed or cost, datacenter proxies are a smart choice. With BirdProxies, you have access to both types, letting you customize your approach to suit your needs perfectly.

What legal factors should I consider when scraping e-commerce websites?

Scraping e-commerce websites in the United States comes with a web of legal considerations. While collecting publicly available data like product listings and prices is generally allowed, accessing information hidden behind login walls, paywalls, or containing personal identifiers could land you in hot water with laws such as the Computer Fraud and Abuse Act (CFAA) or privacy regulations. Additionally, copying copyrighted material, like product images or reviews, may run afoul of the Digital Millennium Copyright Act (DMCA). Even ignoring a website's terms of service could result in claims of contract breach or trespass to chattels.

To navigate these legal waters more safely, keep these tips in mind:

- Stick to publicly accessible data: Avoid scraping anything protected by authentication or other access controls.

- Respect terms of service: Review and adhere to the website's stated rules, steering clear of restricted content.

- Follow copyright and privacy laws: Be extra cautious when dealing with user-generated content or any data that could contain personal information.

Since U.S. laws on web scraping vary depending on the context, consulting a legal expert before diving into large-scale projects is always a wise move.

Another way to reduce risks is by using a reliable proxy provider like BirdProxies. They offer high-quality ISP and residential proxies that mimic real user behavior, helping you avoid detection and steering clear of anti-bot defenses. Still, even with technical tools in place, seeking legal counsel to ensure compliance is essential.

What are the best practices for using proxies to scrape inventory data without getting blocked?

To scrape inventory data efficiently while minimizing the risk of being blocked, start by choosing the appropriate type of proxy. Residential proxies or ISP proxies are ideal for secure, heavily monitored sites, while datacenter proxies are better suited for faster performance on less restrictive pages. Implementing a rotating proxy system helps by regularly switching IP addresses, which reduces the chances of triggering bans.

Stick to website rate limits by controlling the number of requests per IP and introducing random delays between them. Simulate genuine browser activity by using realistic headers like User-Agent and Accept-Language, and make sure to rotate these periodically. For activities that require consistent sessions, such as logging in, use static or sticky proxies to maintain the same IP throughout the session. Regularly monitor your setup’s performance and make adjustments as necessary to ensure uninterrupted operation.